模型可解释性与集成梯度

作者: A_K_Nain

创建日期: 2020/06/02

最后修改: 2020/06/02

描述: 如何为分类模型获取集成梯度。

集成梯度

集成梯度是一种将分类模型的预测归因于其输入特征的技术。这是一种模型可解释性技术:您可以使用它来可视化输入特征与模型预测之间的关系。

集成梯度是计算预测输出与输入特征的梯度的一种变体。要计算集成梯度,我们需要执行以下步骤:

-

确定输入和输出。在我们的例子中,输入是图像,输出是我们模型的最后一层(具有softmax激活的全连接层)。

-

计算在对特定数据点进行预测时,哪些特征对神经网络是重要的。要识别这些特征,我们需要选择一个基线输入。基线输入可以是全黑图像(所有像素值设置为零)或随机噪声。基线输入的形状需要与我们的输入图像相同,例如 (299, 299, 3)。

-

对给定的步数进行基线插值。步数表示在给定输入图像的梯度近似中所需的步骤数。步数是一个超参数。作者建议使用20到1000步之间的任何值。

-

对这些插值图像进行预处理并进行正向传递。

- 获取这些插值图像的梯度。

- 使用梯形规则近似梯度的积分。

要深入了解集成梯度及其工作原理,建议阅读这篇优秀的文章。

参考文献:

设置

import numpy as np

import matplotlib.pyplot as plt

from scipy import ndimage

from IPython.display import Image, display

import tensorflow as tf

import keras

from keras import layers

from keras.applications import xception

# 输入图像的大小

img_size = (299, 299, 3)

# 加载带有imagenet权重的Xception模型

model = xception.Xception(weights="imagenet")

# 目标图像的本地路径

img_path = keras.utils.get_file("elephant.jpg", "https://i.imgur.com/Bvro0YD.png")

display(Image(img_path))

集成梯度算法

def get_img_array(img_path, size=(299, 299)):

# `img` 是一个大小为 299x299 的 PIL 图像

img = keras.utils.load_img(img_path, target_size=size)

# `array` 是一个形状为 (299, 299, 3) 的 float32 Numpy 数组

array = keras.utils.img_to_array(img)

# 我们添加一个维度将数组转变为大小为 (1, 299, 299, 3) 的 "批次"

array = np.expand_dims(array, axis=0)

return array

def get_gradients(img_input, top_pred_idx):

"""计算输出相对于输入图像的梯度。

Args:

img_input: 4D 图像张量

top_pred_idx: 输入图像的预测标签

Returns:

相对于 img_input 的预测梯度

"""

images = tf.cast(img_input, tf.float32)

with tf.GradientTape() as tape:

tape.watch(images)

preds = model(images)

top_class = preds[:, top_pred_idx]

grads = tape.gradient(top_class, images)

return grads

def get_integrated_gradients(img_input, top_pred_idx, baseline=None, num_steps=50):

"""计算预测标签的集成梯度。

Args:

img_input (ndarray): 原始图像

top_pred_idx: 输入图像的预测标签

baseline (ndarray): 用于插值的基准图像

num_steps: 在基准和输入之间进行插值的步数

用于计算集成梯度。这些步长决定了积分近似误差。默认情况下,

num_steps 设置为 50。

Returns:

相对于输入图像的集成梯度

"""

# 如果没有提供基准,就从一张与输入图像同大小的黑图像开始。

if baseline is None:

baseline = np.zeros(img_size).astype(np.float32)

else:

baseline = baseline.astype(np.float32)

# 1. 进行插值。

img_input = img_input.astype(np.float32)

interpolated_image = [

baseline + (step / num_steps) * (img_input - baseline)

for step in range(num_steps + 1)

]

interpolated_image = np.array(interpolated_image).astype(np.float32)

# 2. 对插值图像进行预处理

interpolated_image = xception.preprocess_input(interpolated_image)

# 3. 获取梯度

grads = []

for i, img in enumerate(interpolated_image):

img = tf.expand_dims(img, axis=0)

grad = get_gradients(img, top_pred_idx=top_pred_idx)

grads.append(grad[0])

grads = tf.convert_to_tensor(grads, dtype=tf.float32)

# 4. 使用梯形法则近似积分

grads = (grads[:-1] + grads[1:]) / 2.0

avg_grads = tf.reduce_mean(grads, axis=0)

# 5. 计算集成梯度并返回

integrated_grads = (img_input - baseline) * avg_grads

return integrated_grads

def random_baseline_integrated_gradients(

img_input, top_pred_idx, num_steps=50, num_runs=2

):

"""生成多个随机基准图像。

Args:

img_input (ndarray): 3D 图像

top_pred_idx: 输入图像的预测标签

num_steps: 在基准和输入之间进行插值的步数

用于计算集成梯度。这些步长决定了积分近似误差。默认情况下,

num_steps 设置为 50。

num_runs: 要生成的基准图像数量

Returns:

用于 `num_runs` 基准图像的平均集成梯度

"""

# 1. 用于跟踪所有图像的集成梯度(IG)的列表

integrated_grads = []

# 2. 获取所有基准的集成梯度

for run in range(num_runs):

baseline = np.random.random(img_size) * 255

igrads = get_integrated_gradients(

img_input=img_input,

top_pred_idx=top_pred_idx,

baseline=baseline,

num_steps=num_steps,

)

integrated_grads.append(igrads)

# 3. 返回图像的平均集成梯度

integrated_grads = tf.convert_to_tensor(integrated_grads)

return tf.reduce_mean(integrated_grads, axis=0)

用于可视化梯度和积分梯度的辅助类

class GradVisualizer:

"""Plot gradients of the outputs w.r.t an input image."""

def __init__(self, positive_channel=None, negative_channel=None):

if positive_channel is None:

self.positive_channel = [0, 255, 0]

else:

self.positive_channel = positive_channel

if negative_channel is None:

self.negative_channel = [255, 0, 0]

else:

self.negative_channel = negative_channel

def apply_polarity(self, attributions, polarity):

if polarity == "positive":

return np.clip(attributions, 0, 1)

else:

return np.clip(attributions, -1, 0)

def apply_linear_transformation(

self,

attributions,

clip_above_percentile=99.9,

clip_below_percentile=70.0,

lower_end=0.2,

):

# 1. Get the thresholds

m = self.get_thresholded_attributions(

attributions, percentage=100 - clip_above_percentile

)

e = self.get_thresholded_attributions(

attributions, percentage=100 - clip_below_percentile

)

# 2. Transform the attributions by a linear function f(x) = a*x + b such that

# f(m) = 1.0 and f(e) = lower_end

transformed_attributions = (1 - lower_end) * (np.abs(attributions) - e) / (

m - e

) + lower_end

# 3. Make sure that the sign of transformed attributions is the same as original attributions

transformed_attributions *= np.sign(attributions)

# 4. Only keep values that are bigger than the lower_end

transformed_attributions *= transformed_attributions >= lower_end

# 5. Clip values and return

transformed_attributions = np.clip(transformed_attributions, 0.0, 1.0)

return transformed_attributions

def get_thresholded_attributions(self, attributions, percentage):

if percentage == 100.0:

return np.min(attributions)

# 1. Flatten the attributions

flatten_attr = attributions.flatten()

# 2. Get the sum of the attributions

total = np.sum(flatten_attr)

# 3. Sort the attributions from largest to smallest.

sorted_attributions = np.sort(np.abs(flatten_attr))[::-1]

# 4. Calculate the percentage of the total sum that each attribution

# and the values about it contribute.

cum_sum = 100.0 * np.cumsum(sorted_attributions) / total

# 5. Threshold the attributions by the percentage

indices_to_consider = np.where(cum_sum >= percentage)[0][0]

# 6. Select the desired attributions and return

attributions = sorted_attributions[indices_to_consider]

return attributions

def binarize(self, attributions, threshold=0.001):

return attributions > threshold

def morphological_cleanup_fn(self, attributions, structure=np.ones((4, 4))):

closed = ndimage.grey_closing(attributions, structure=structure)

opened = ndimage.grey_opening(closed, structure=structure)

return opened

def draw_outlines(

self,

attributions,

percentage=90,

connected_component_structure=np.ones((3, 3)),

):

# 1. Binarize the attributions.

attributions = self.binarize(attributions)

# 2. Fill the gaps

attributions = ndimage.binary_fill_holes(attributions)

# 3. Compute connected components

connected_components, num_comp = ndimage.label(

attributions, structure=connected_component_structure

)

# 4. Sum up the attributions for each component

total = np.sum(attributions[connected_components > 0])

component_sums = []

for comp in range(1, num_comp + 1):

mask = connected_components == comp

component_sum = np.sum(attributions[mask])

component_sums.append((component_sum, mask))

# 5. Compute the percentage of top components to keep

sorted_sums_and_masks = sorted(component_sums, key=lambda x: x[0], reverse=True)

sorted_sums = list(zip(*sorted_sums_and_masks))[0]

cumulative_sorted_sums = np.cumsum(sorted_sums)

cutoff_threshold = percentage * total / 100

cutoff_idx = np.where(cumulative_sorted_sums >= cutoff_threshold)[0][0]

if cutoff_idx > 2:

cutoff_idx = 2

# 6. Set the values for the kept components

border_mask = np.zeros_like(attributions)

for i in range(cutoff_idx + 1):

border_mask[sorted_sums_and_masks[i][1]] = 1

# 7. Make the mask hollow and show only the border

eroded_mask = ndimage.binary_erosion(border_mask, iterations=1)

border_mask[eroded_mask] = 0

# 8. Return the outlined mask

return border_mask

def process_grads(

self,

image,

attributions,

polarity="positive",

clip_above_percentile=99.9,

clip_below_percentile=0,

morphological_cleanup=False,

structure=np.ones((3, 3)),

outlines=False,

outlines_component_percentage=90,

overlay=True,

):

if polarity not in ["positive", "negative"]:

raise ValueError(

f""" Allowed polarity values: 'positive' or 'negative'

but provided {polarity}"""

)

if clip_above_percentile < 0 or clip_above_percentile > 100:

raise ValueError("clip_above_percentile must be in [0, 100]")

if clip_below_percentile < 0 or clip_below_percentile > 100:

raise ValueError("clip_below_percentile must be in [0, 100]")

# 1. Apply polarity

if polarity == "positive":

attributions = self.apply_polarity(attributions, polarity=polarity)

channel = self.positive_channel

else:

attributions = self.apply_polarity(attributions, polarity=polarity)

attributions = np.abs(attributions)

channel = self.negative_channel

# 2. Take average over the channels

attributions = np.average(attributions, axis=2)

# 3. Apply linear transformation to the attributions

attributions = self.apply_linear_transformation(

attributions,

clip_above_percentile=clip_above_percentile,

clip_below_percentile=clip_below_percentile,

lower_end=0.0,

)

# 4. Cleanup

if morphological_cleanup:

attributions = self.morphological_cleanup_fn(

attributions, structure=structure

)

# 5. Draw the outlines

if outlines:

attributions = self.draw_outlines(

attributions, percentage=outlines_component_percentage

)

# 6. Expand the channel axis and convert to RGB

attributions = np.expand_dims(attributions, 2) * channel

# 7.Superimpose on the original image

if overlay:

attributions = np.clip((attributions * 0.8 + image), 0, 255)

return attributions

def visualize(

self,

image,

gradients,

integrated_gradients,

polarity="positive",

clip_above_percentile=99.9,

clip_below_percentile=0,

morphological_cleanup=False,

structure=np.ones((3, 3)),

outlines=False,

outlines_component_percentage=90,

overlay=True,

figsize=(15, 8),

):

# 1. Make two copies of the original image

img1 = np.copy(image)

img2 = np.copy(image)

# 2. Process the normal gradients

grads_attr = self.process_grads(

image=img1,

attributions=gradients,

polarity=polarity,

clip_above_percentile=clip_above_percentile,

clip_below_percentile=clip_below_percentile,

morphological_cleanup=morphological_cleanup,

structure=structure,

outlines=outlines,

outlines_component_percentage=outlines_component_percentage,

overlay=overlay,

)

# 3. Process the integrated gradients

igrads_attr = self.process_grads(

image=img2,

attributions=integrated_gradients,

polarity=polarity,

clip_above_percentile=clip_above_percentile,

clip_below_percentile=clip_below_percentile,

morphological_cleanup=morphological_cleanup,

structure=structure,

outlines=outlines,

outlines_component_percentage=outlines_component_percentage,

overlay=overlay,

)

_, ax = plt.subplots(1, 3, figsize=figsize)

ax[0].imshow(image)

ax[1].imshow(grads_attr.astype(np.uint8))

ax[2].imshow(igrads_attr.astype(np.uint8))

ax[0].set_title("Input")

ax[1].set_title("Normal gradients")

ax[2].set_title("Integrated gradients")

plt.show()

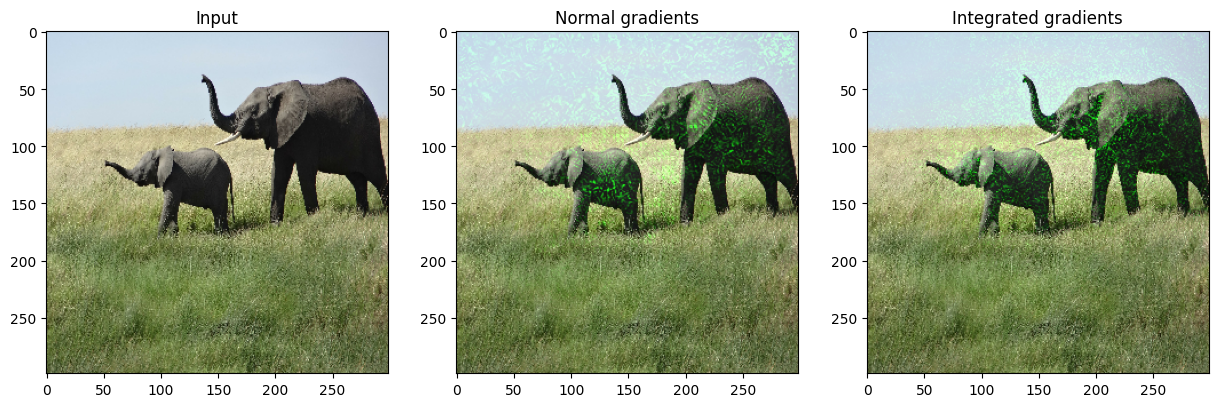

让我们进行测试驱动

# 1. 将图像转换为numpy数组

img = get_img_array(img_path)

# 2. 保留原始图像的副本

orig_img = np.copy(img[0]).astype(np.uint8)

# 3. 对图像进行预处理

img_processed = tf.cast(xception.preprocess_input(img), dtype=tf.float32)

# 4. 获取模型预测

preds = model.predict(img_processed)

top_pred_idx = tf.argmax(preds[0])

print("预测:", top_pred_idx, xception.decode_predictions(preds, top=1)[0])

# 5. 获取预测标签的最后一层梯度

grads = get_gradients(img_processed, top_pred_idx=top_pred_idx)

# 6. 获取集成梯度

igrads = random_baseline_integrated_gradients(

np.copy(orig_img), top_pred_idx=top_pred_idx, num_steps=50, num_runs=2

)

# 7. 处理梯度并绘制

vis = GradVisualizer()

vis.visualize(

image=orig_img,

gradients=grads[0].numpy(),

integrated_gradients=igrads.numpy(),

clip_above_percentile=99,

clip_below_percentile=0,

)

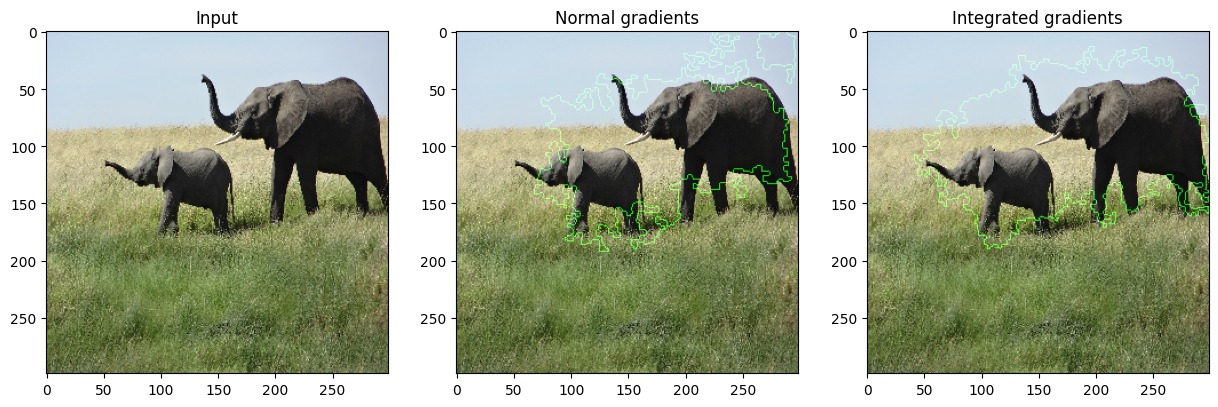

vis.visualize(

image=orig_img,

gradients=grads[0].numpy(),

integrated_gradients=igrads.numpy(),

clip_above_percentile=95,

clip_below_percentile=28,

morphological_cleanup=True,

outlines=True,

)

1/1 ━━━━━━━━━━━━━━━━━━━━ 5s 5s/step

警告: 在调用 absl::InitializeLog() 之前的所有日志消息都写入 STDERR

I0000 00:00:1699486705.534012 86541 device_compiler.h:187] 使用 XLA 编译了集群! 该行在进程的生命周期内最多记录一次。

预测: tf.Tensor(386, shape=(), dtype=int64) [('n02504458', '非洲象', 0.8871446)]