Note

Go to the end to download the full example code. or to run this example in your browser via Binder

使用字典学习进行图像去噪#

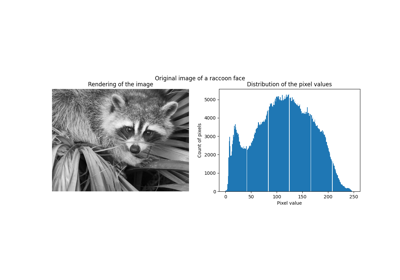

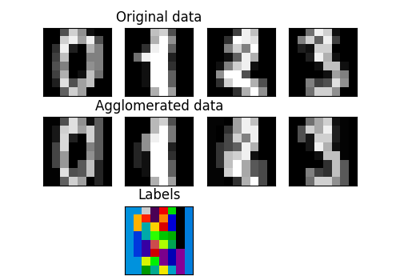

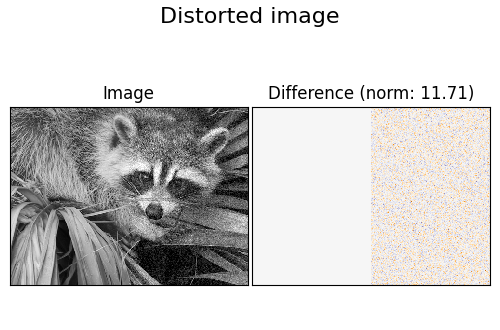

一个比较示例,展示了使用在线 DictionaryLearning 和各种变换方法重建浣熊脸图像的噪声片段的效果。

字典在图像的左半部分失真区域上进行拟合,随后用于重建右半部分。请注意,通过拟合无失真(即无噪声)图像可以获得更好的性能,但这里我们假设无失真图像不可用。

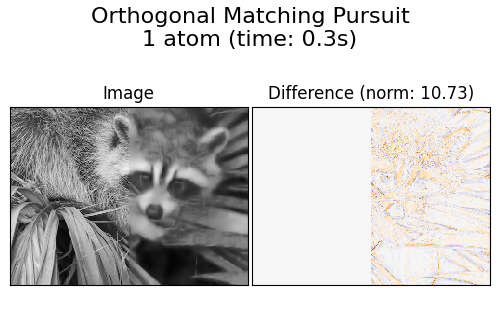

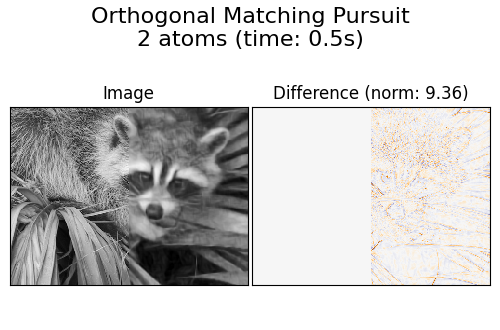

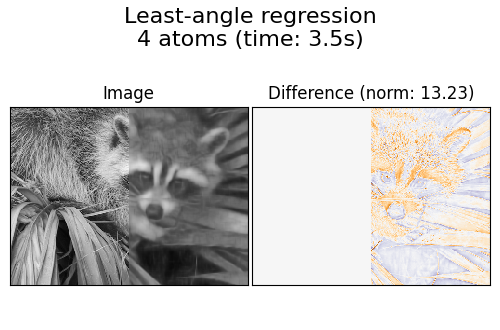

评估图像去噪结果的常见做法是查看重建图像与原始图像之间的差异。如果重建是完美的,这种差异将看起来像高斯噪声。

从图表中可以看出,使用两个非零系数的 正交匹配追踪 (OMP) 结果比仅保留一个系数的结果偏差更小(边缘看起来不那么明显)。此外,在 Frobenius 范数下,它更接近真实值。

最小角回归 的结果偏差更大:差异让人联想到原始图像的局部强度值。

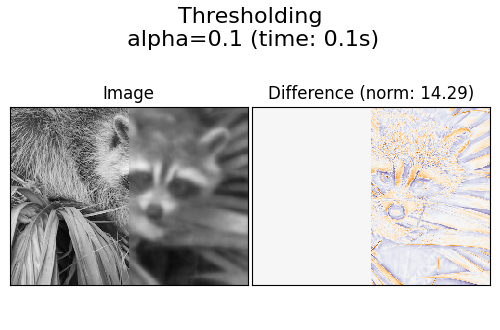

阈值处理显然对去噪没有用,但这里展示它可以以非常高的速度产生有启发性的输出,因此在性能不一定与可视化相关的其他任务(如对象分类)中可能有用。

生成失真图像#

import numpy as np

try: # Scipy >= 1.10

from scipy.datasets import face

except ImportError:

from scipy.misc import face

raccoon_face = face(gray=True)

# 将值在0到255之间的uint8表示转换为值在0到1之间的浮点表示。

raccoon_face = raccoon_face / 255.0

# 降采样以提高速度

raccoon_face = (

raccoon_face[ : :4, ::4]

+ raccoon_face[1 : :4, ::4]

+ raccoon_face[ : :4, 1::4]

+ raccoon_face[1 : :4, 1::4]

)

raccoon_face /= 4.0

height, width = raccoon_face.shape

# 扭曲图像的右半部分

print("Distorting image...")

distorted = raccoon_face.copy()

distorted[:, width // 2 :] += 0.075 * np.random.randn(height, width // 2)

Distorting image...

显示失真图像#

import matplotlib.pyplot as plt

def show_with_diff(image, reference, title):

"""辅助函数用于显示去噪"""

plt.figure(figsize=(5, 3.3))

plt.subplot(1, 2, 1)

plt.title("Image")

plt.imshow(image, vmin=0, vmax=1, cmap=plt.cm.gray, interpolation="nearest")

plt.xticks(())

plt.yticks(())

plt.subplot(1, 2, 2)

difference = image - reference

plt.title("Difference (norm: %.2f)" % np.sqrt(np.sum(difference**2)))

plt.imshow(

difference, vmin=-0.5, vmax=0.5, cmap=plt.cm.PuOr, interpolation="nearest"

)

plt.xticks(())

plt.yticks(())

plt.suptitle(title, size=16)

plt.subplots_adjust(0.02, 0.02, 0.98, 0.79, 0.02, 0.2)

show_with_diff(distorted, raccoon_face, "Distorted image")

提取参考补丁#

from time import time

from sklearn.feature_extraction.image import extract_patches_2d

# 从图像的左半部分提取所有参考块

print("Extracting reference patches...")

t0 = time()

patch_size = (7, 7)

data = extract_patches_2d(distorted[:, : width // 2], patch_size)

data = data.reshape(data.shape[0], -1)

data -= np.mean(data, axis=0)

data /= np.std(data, axis=0)

print(f"{data.shape[0]} patches extracted in %.2fs." % (time() - t0))

Extracting reference patches...

22692 patches extracted in 0.00s.

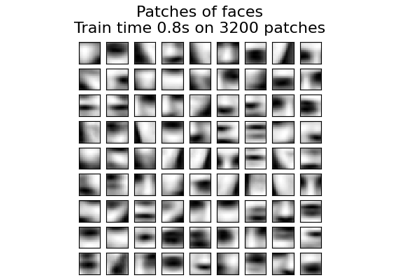

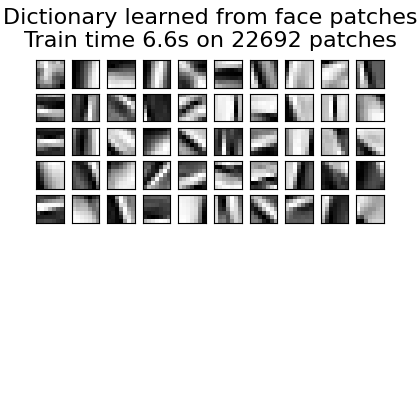

从参考图像块中学习字典#

from sklearn.decomposition import MiniBatchDictionaryLearning

print("Learning the dictionary...")

t0 = time()

dico = MiniBatchDictionaryLearning(

# 增加到300以获得更高质量的结果,但训练时间会变慢。

n_components=50,

batch_size=200,

alpha=1.0,

max_iter=10,

)

V = dico.fit(data).components_

dt = time() - t0

print(f"{dico.n_iter_} iterations / {dico.n_steps_} steps in {dt:.2f}.")

plt.figure(figsize=(4.2, 4))

for i, comp in enumerate(V[:100]):

plt.subplot(10, 10, i + 1)

plt.imshow(comp.reshape(patch_size), cmap=plt.cm.gray_r, interpolation="nearest")

plt.xticks(())

plt.yticks(())

plt.suptitle(

"Dictionary learned from face patches\n"

+ "Train time %.1fs on %d patches" % (dt, len(data)),

fontsize=16,

)

plt.subplots_adjust(0.08, 0.02, 0.92, 0.85, 0.08, 0.23)

Learning the dictionary...

2.0 iterations / 118 steps in 6.62.

提取噪声补丁并使用字典重建它们#

from sklearn.feature_extraction.image import reconstruct_from_patches_2d

print("Extracting noisy patches... ")

t0 = time()

data = extract_patches_2d(distorted[:, width // 2 :], patch_size)

data = data.reshape(data.shape[0], -1)

intercept = np.mean(data, axis=0)

data -= intercept

print("done in %.2fs." % (time() - t0))

transform_algorithms = [

("Orthogonal Matching Pursuit\n1 atom", "omp", {"transform_n_nonzero_coefs": 1}),

("Orthogonal Matching Pursuit\n2 atoms", "omp", {"transform_n_nonzero_coefs": 2}),

("Least-angle regression\n4 atoms", "lars", {"transform_n_nonzero_coefs": 4}),

("Thresholding\n alpha=0.1", "threshold", {"transform_alpha": 0.1}),

]

reconstructions = {}

for title, transform_algorithm, kwargs in transform_algorithms:

print(title + "...")

reconstructions[title] = raccoon_face.copy()

t0 = time()

dico.set_params(transform_algorithm=transform_algorithm, **kwargs)

code = dico.transform(data)

patches = np.dot(code, V)

patches += intercept

patches = patches.reshape(len(data), *patch_size)

if transform_algorithm == "threshold":

patches -= patches.min()

patches /= patches.max()

reconstructions[title][:, width // 2 :] = reconstruct_from_patches_2d(

patches, (height, width // 2)

)

dt = time() - t0

print("done in %.2fs." % dt)

show_with_diff(reconstructions[title], raccoon_face, title + " (time: %.1fs)" % dt)

plt.show()

Extracting noisy patches...

done in 0.00s.

Orthogonal Matching Pursuit

1 atom...

done in 0.26s.

Orthogonal Matching Pursuit

2 atoms...

done in 0.47s.

Least-angle regression

4 atoms...

done in 3.50s.

Thresholding

alpha=0.1...

done in 0.06s.

Total running time of the script: (0 minutes 11.675 seconds)

Related examples