Note

Go to the end to download the full example code. or to run this example in your browser via Binder

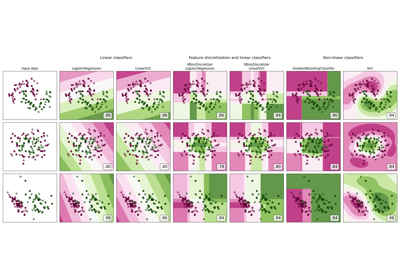

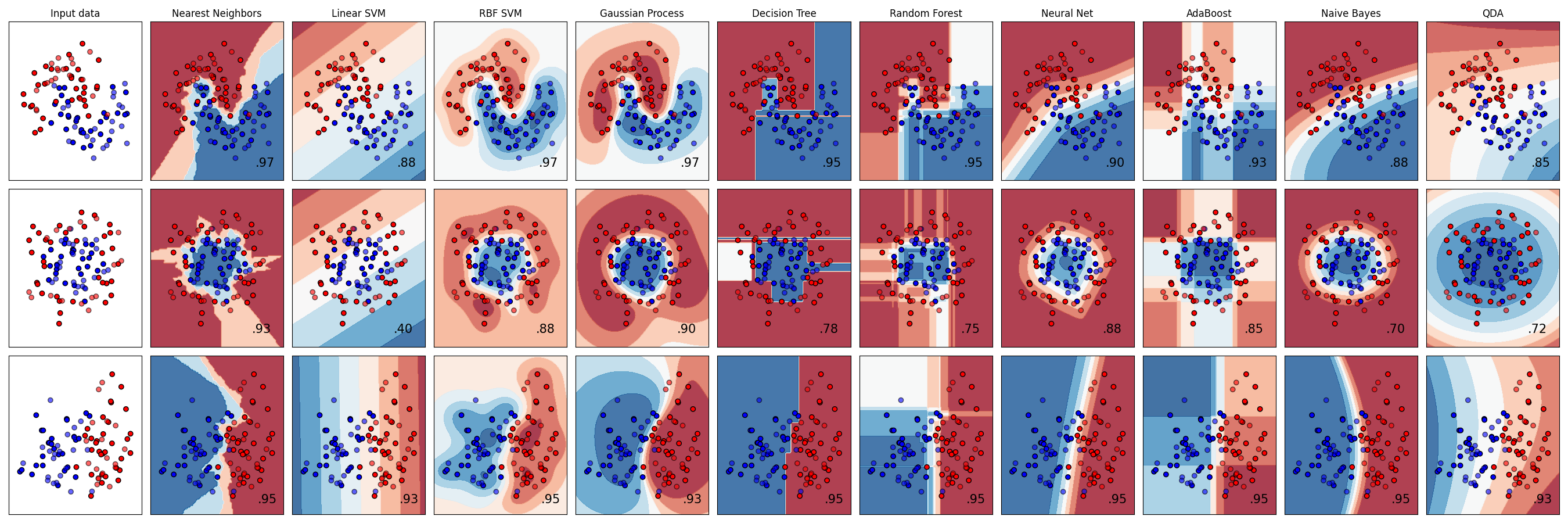

分类器比较#

在scikit-learn中的一些分类器在合成数据集上的比较。 这个示例的目的是为了展示不同分类器的决策边界的性质。 需要谨慎看待这些示例所传达的直观感受,因为这些直观感受不一定适用于真实数据集。

特别是在高维空间中,数据更容易线性分离,像朴素贝叶斯和线性SVM这样的简单分类器可能会比其他分类器更好地实现泛化。

图中显示了训练点(实心颜色)和测试点(半透明颜色)。右下角显示了测试集上的分类准确率。

# 代码来源:Gaël Varoquaux

# Andreas Müller

# 由Jaques Grobler为文档修改

# SPDX许可证标识符:BSD-3-Clause

import matplotlib.pyplot as plt

import numpy as np

from matplotlib.colors import ListedColormap

from sklearn.datasets import make_circles, make_classification, make_moons

from sklearn.discriminant_analysis import QuadraticDiscriminantAnalysis

from sklearn.ensemble import AdaBoostClassifier, RandomForestClassifier

from sklearn.gaussian_process import GaussianProcessClassifier

from sklearn.gaussian_process.kernels import RBF

from sklearn.inspection import DecisionBoundaryDisplay

from sklearn.model_selection import train_test_split

from sklearn.naive_bayes import GaussianNB

from sklearn.neighbors import KNeighborsClassifier

from sklearn.neural_network import MLPClassifier

from sklearn.pipeline import make_pipeline

from sklearn.preprocessing import StandardScaler

from sklearn.svm import SVC

from sklearn.tree import DecisionTreeClassifier

names = [

"Nearest Neighbors",

"Linear SVM",

"RBF SVM",

"Gaussian Process",

"Decision Tree",

"Random Forest",

"Neural Net",

"AdaBoost",

"Naive Bayes",

"QDA",

]

classifiers = [

KNeighborsClassifier(3),

SVC(kernel="linear", C=0.025, random_state=42),

SVC(gamma=2, C=1, random_state=42),

GaussianProcessClassifier(1.0 * RBF(1.0), random_state=42),

DecisionTreeClassifier(max_depth=5, random_state=42),

RandomForestClassifier(

max_depth=5, n_estimators=10, max_features=1, random_state=42

),

MLPClassifier(alpha=1, max_iter=1000, random_state=42),

AdaBoostClassifier(algorithm="SAMME", random_state=42),

GaussianNB(),

QuadraticDiscriminantAnalysis(),

]

X, y = make_classification(

n_features=2, n_redundant=0, n_informative=2, random_state=1, n_clusters_per_class=1

)

rng = np.random.RandomState(2)

X += 2 * rng.uniform(size=X.shape)

linearly_separable = (X, y)

datasets = [

make_moons(noise=0.3, random_state=0),

make_circles(noise=0.2, factor=0.5, random_state=1),

linearly_separable,

]

figure = plt.figure(figsize=(27, 9))

i = 1

# 遍历数据集

for ds_cnt, ds in enumerate(datasets):

# 预处理数据集,分为训练部分和测试部分

X, y = ds

X_train, X_test, y_train, y_test = train_test_split(

X, y, test_size=0.4, random_state=42

)

x_min, x_max = X[:, 0].min() - 0.5, X[:, 0].max() + 0.5

y_min, y_max = X[:, 1].min() - 0.5, X[:, 1].max() + 0.5

# 只需先绘制数据集。

cm = plt.cm.RdBu

cm_bright = ListedColormap(["#FF0000", "#0000FF"])

ax = plt.subplot(len(datasets), len(classifiers) + 1, i)

if ds_cnt == 0:

ax.set_title("Input data")

# 绘制训练点

ax.scatter(X_train[:, 0], X_train[:, 1], c=y_train, cmap=cm_bright, edgecolors="k")

# 绘制测试点

ax.scatter(

X_test[:, 0], X_test[:, 1], c=y_test, cmap=cm_bright, alpha=0.6, edgecolors="k"

)

ax.set_xlim(x_min, x_max)

ax.set_ylim(y_min, y_max)

ax.set_xticks(())

ax.set_yticks(())

i += 1

# 遍历分类器

for name, clf in zip(names, classifiers):

ax = plt.subplot(len(datasets), len(classifiers) + 1, i)

clf = make_pipeline(StandardScaler(), clf)

clf.fit(X_train, y_train)

score = clf.score(X_test, y_test)

DecisionBoundaryDisplay.from_estimator(

clf, X, cmap=cm, alpha=0.8, ax=ax, eps=0.5

)

# 绘制训练点

ax.scatter(

X_train[:, 0], X_train[:, 1], c=y_train, cmap=cm_bright, edgecolors="k"

)

# 绘制测试点

ax.scatter(

X_test[:, 0],

X_test[:, 1],

c=y_test,

cmap=cm_bright,

edgecolors="k",

alpha=0.6,

)

ax.set_xlim(x_min, x_max)

ax.set_ylim(y_min, y_max)

ax.set_xticks(())

ax.set_yticks(())

if ds_cnt == 0:

ax.set_title(name)

ax.text(

x_max - 0.3,

y_min + 0.3,

("%.2f" % score).lstrip("0"),

size=15,

horizontalalignment="right",

)

i += 1

plt.tight_layout()

plt.show()

Total running time of the script: (0 minutes 1.112 seconds)

Related examples